
Document Status: Abstract proposed November 26, 2018 and revised based on reviewer feedback February 2019. The final conference abstract is available online at http://jubilees.stmarytx.edu/thanneken/2019/Hanneken_2019_DeepDigitization.html and through the conference website. The conference paper itself was composed starting May 27, 2019 and achieved its final form prior to the oral presentation on July 10, 2019. The paper exists in a long form intended for written media and a short form designed to be read within twenty minutes at the conference. The portions from the written paper excluded from the oral presentation are indicated in dark red type. The portions added to the oral presentation are indicated in dark blue type. The formatting for web distribution was completed on August 2, 2019. The footnotes were expanded on October 26, 2019.

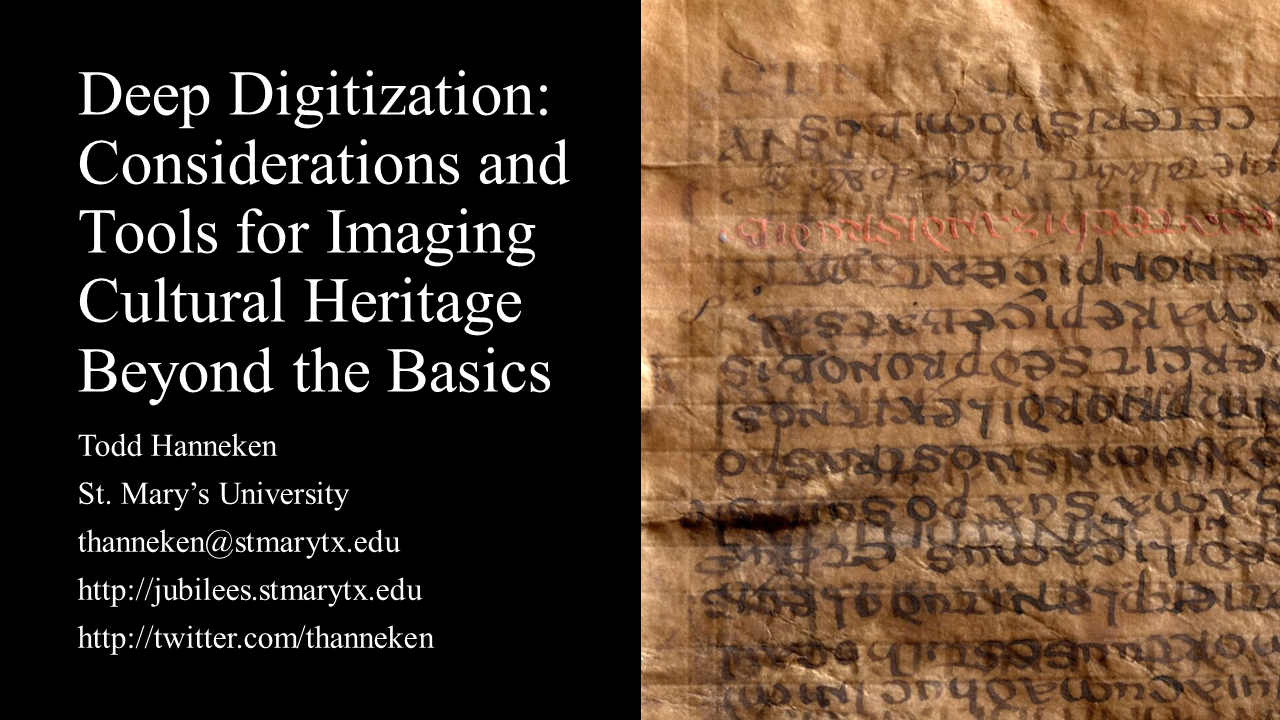

Today I hope to elevate the debate about digitization of manuscripts and other artifacts in two ways. First, I will offer some theoretical discussion about how the terminology of the conversation could be more precise. Second, I will introduce some new tools recently developed and made public through research supported by the U.S. National Endowment for the Humanities (grant numbers HD-51709-13 and HK-250616-16).

Much discussion has surrounded the growing number of manuscripts available online in digital facsimile.

There are staunch supporters who celebrate every digitization project,

and there are skeptics who question the impact on scholarship of digital mediation replacing first-hand inspection of artifacts.

See especially the September 2018 special issue of Archive Journal, “Digital Medieval Manuscript Cultures.”

http://www.archivejournal.net/essays/digital-medieval-manuscript-cultures/.

The introduction by the editors, Michael Hanrahan and Bridget Whearty, explains the structure of the special issue as a “self-conscious arc”

beginning with “careful critiques of digital manuscripts.”

First among these is A. S. G. Edwards, “The Digital Archive, Scholarly Enquiry, and the Study of Medieval English Manuscripts.”

The second, Andrew Prescott and Lorna Hughes, “Why Do We Digitize? The Case for Slow Digitization,” is more optimistic but calls for caution.

Other essays in the special issue address the potential of digitization more enthusiastically.

See especially

Johanna Green, “Digital Manuscripts as Sites of Touch,”

and Julia Craig-McFeely, “Recovering Lost Texts: Rebuilding Lost Manuscripts.”

A similar range of enthusiasm and caution can be found in the

open-access third volume in the series Digital Biblical Studies from Brill.

This volume was edited by David Hamidović, Claire Clivaz and Sarah Bowen Savant

and titled Ancient Manuscripts in Digital Culture: Visualisation, Data Mining, Communication

(https://doi.org/10.1163/9789004399297).

See especially Lied, “Digitization and Manuscripts as Visual Objects: Reflections from a Media Studies Perspective.”

Speaking explicitly of conventional digitization, she notes insightfully the ways in which the digital simulation is not to be equated with the simulated.

Stronger caution appears in

Brent Landau, Adeline Harrington and James C. Henriques,

“‘What No Eye Has Seen’: Using a Digital Microscope to Edit Papyrus Fragments of Early Christian Apocryphal Writings.”

They assert that “there is absolutely nothing that replaces having the actual physical manuscript present for observation with the naked eye.”

It should be noted, however, that the context of their article is not the theoretical principles of digitization, but a 1.3 megapixel digital microscope.

For more critical reflection on the role of digital technology in the study of biblical manuscripts see also the introduction by two of the editors.

I take as a point of departure the basic observation that a digital surrogate is a distinct artifact, not to be simply equated with the original.

I will argue that digitization is not a thing, but a range of possibilities.

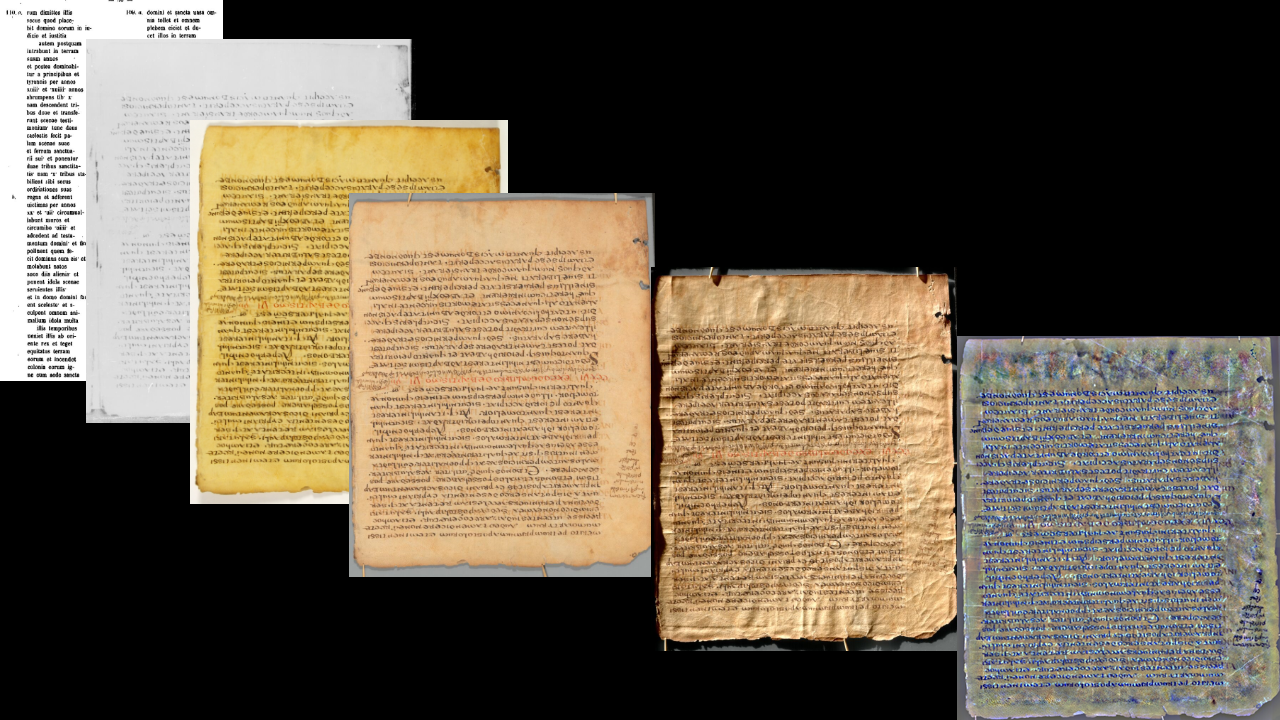

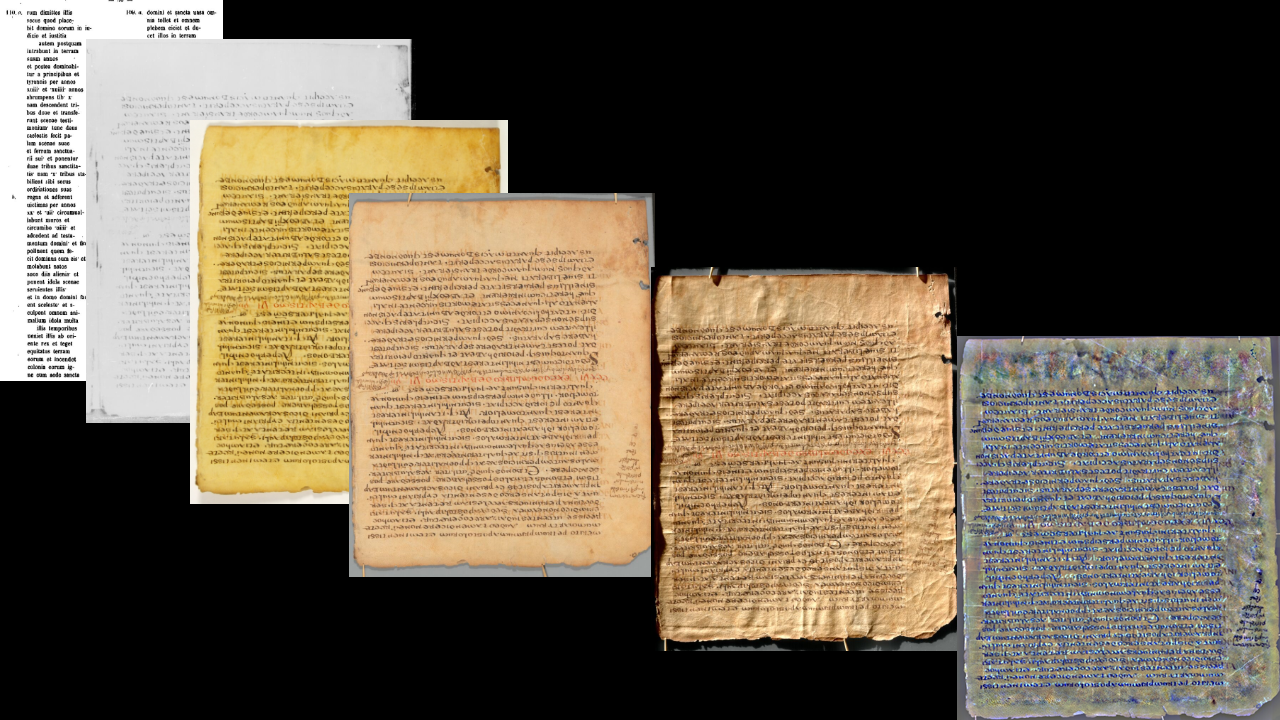

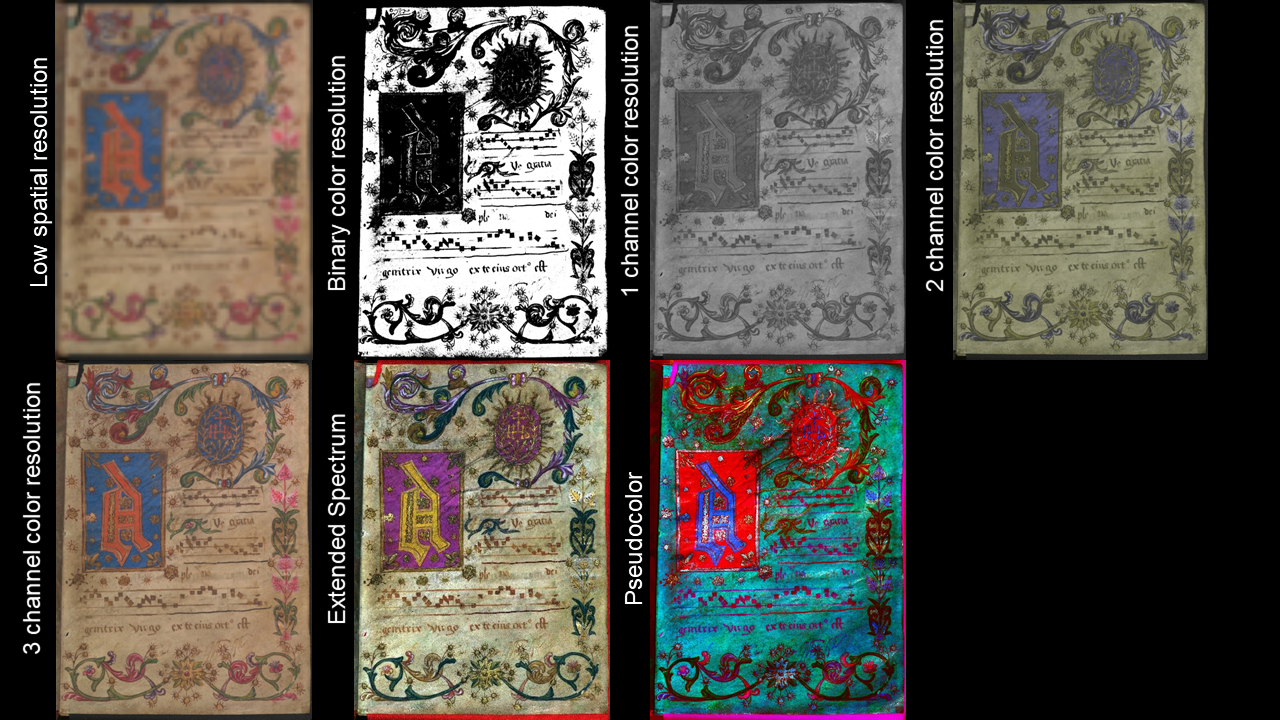

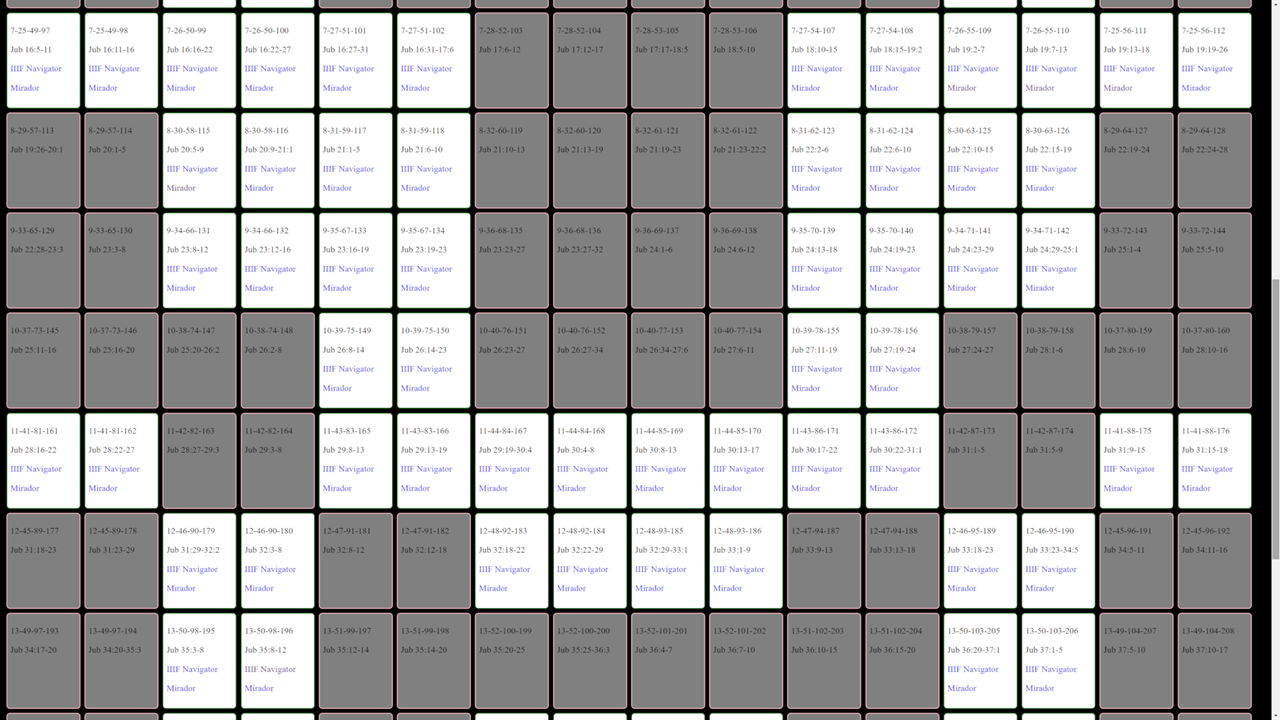

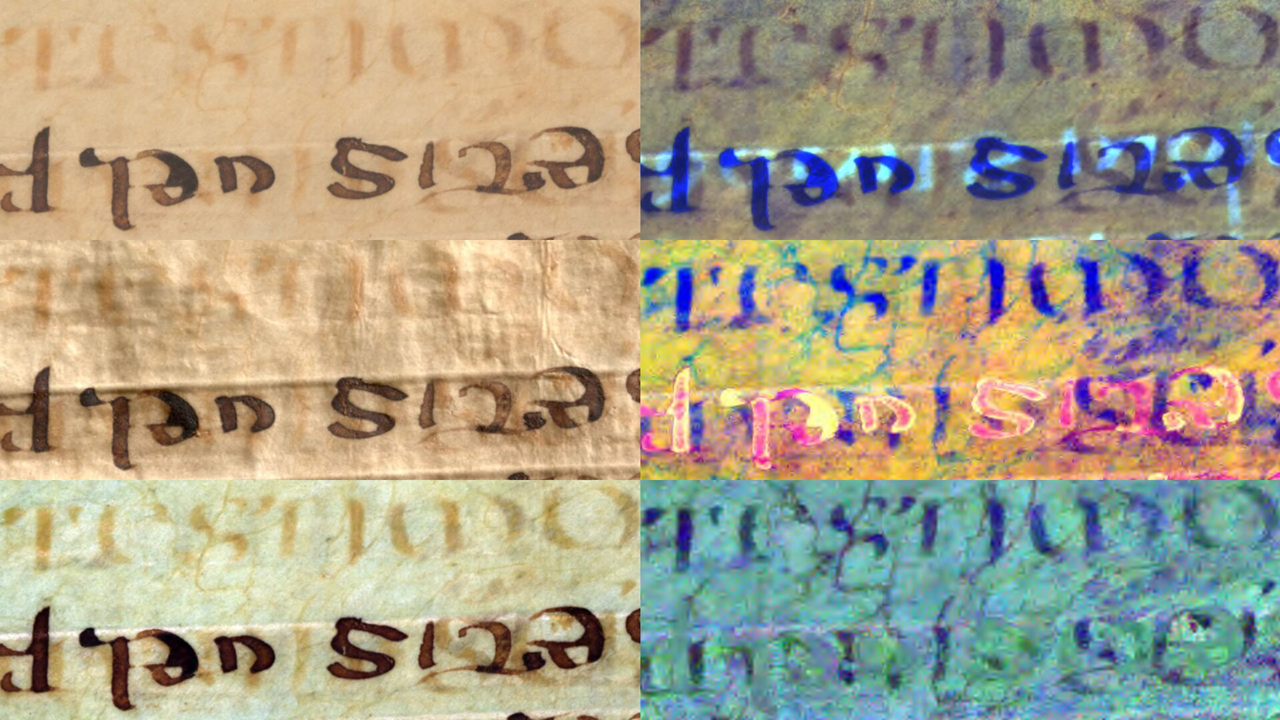

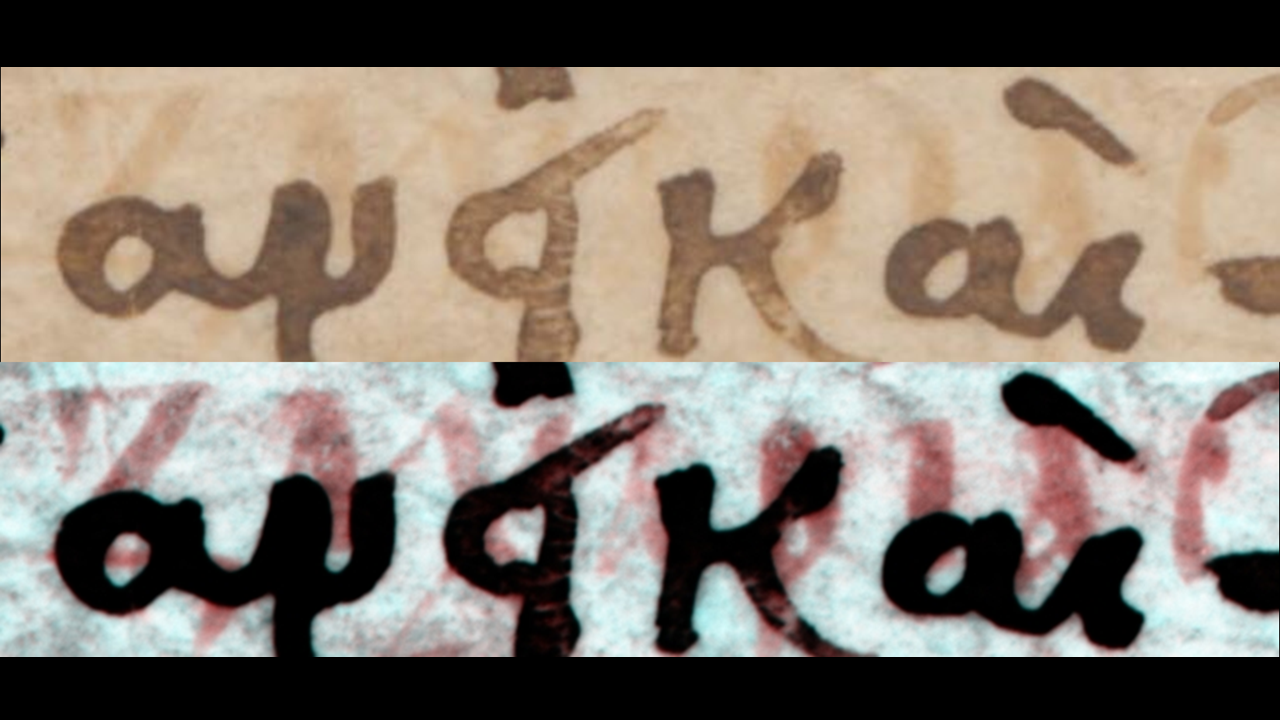

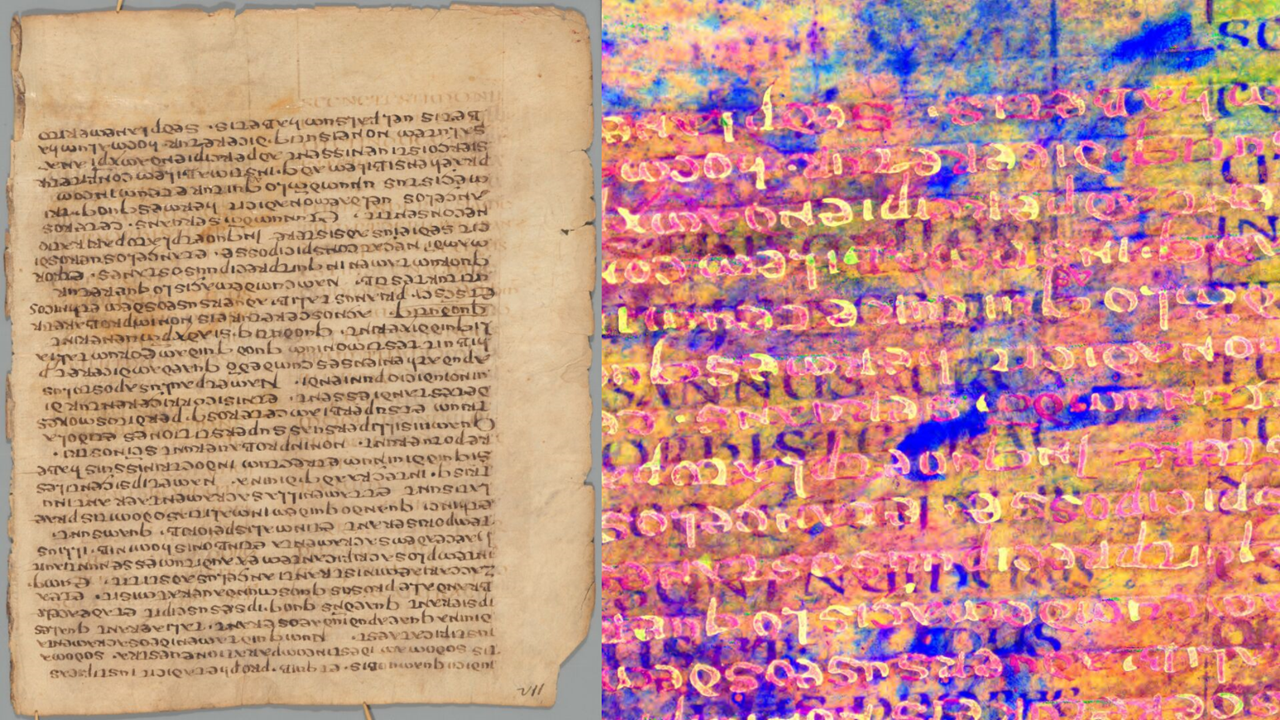

For example, we see here six digital images representing the same page of the Jubilees Palimpsest. One should not speak of the limitations of digital technology when one means the limitations of one mode of digitization. One should not compare a digital simulation to first-hand experience when one means a single instance of the many possibilities of digital simulation. I further propose that selection from among the possible modalities of digital capture and rendering should be based on reflection on the inquiry of humanities scholars, future as well as present.

The new tools to be introduced allow a single unified data set to capture and render information about the color and texture of manuscripts and other artifacts.

Previously, digital multispectral imaging had a tremendous impact on our ability to image artifacts, both for accuracy and for enhancements.

Similarly but separately, Reflectance Transformation Imaging (RTI) had a tremendous impact on our ability to image artifacts for interactivity and enhancements in texture and shininess.

Today, free software, documentation, and training are available for the complete integration of those technologies.

Todd R. Hanneken,

“Guide to Creating Spectral RTI Images.”

The Jubilees Palimpsest Project.

Accessed October 26, 2019.

http://jubilees.stmarytx.edu/spectralrtiguide/.

This combination of spectral imaging and RTI complements, rather than competes with, laser scanning and photogrammetry for modeling the surface boundaries of an object.

Both the advocates and skeptics of manuscript digitization tend to err in assuming that they know what digitization is, that what they have seen is the extent of what is possible.

Whether we take it as a sign of fundamental misconception or simply convenient shorthand,

we should recognize that it is not quite right to call something a “manuscript viewer,”

when we mean a viewer of digital images of manuscripts.

Similarly, if we say that a manuscript or collection has been digitized, we should be clear that we mean

a certain process or project has been completed,

not that every conceivable piece of information in the manuscript has been converted into a digital format.

The so-called “limits of digitization” are usually not theoretical limits

but practical limitations of the mode of digitization available to the speaker.

My new favorite term for a digital surrogate or facsimile of a manuscript comes from

Liv Ingeborg Lied’s essay in the recent volume of Brill’s Digital Biblical Studies.

Liv Ingeborg Lied,

“Digitization and Manuscripts as Visual Objects: Reflections from a Media Studies Perspective”.

In Ancient Manuscripts in Digital Culture: Visualisation, Data Mining, Communication,

(David Hamidović, et al., eds; Digital Biblical Studies 3; Leiden: Brill, 2019),

pp. 15–29, here 19.

The term “simulation” expresses the right mix of connotations.

First, it emphasizes the otherness of the simulation from that which is simulated.

Second, it has, for me at least, a positive connotation as making accessible that which would otherwise be inaccessible.

Although building cities, flying fighter jets, and racing supercars all exist in the non-digital world,

none of those experiences have been available to me, certainly not as a child.

Indeed, even a real-life pilot could benefit from operating a simulation.

I also like the term “simulation” because it signals a range of possible quality based on the accuracy and precision with which the simulation resembles the simulated.

The flight simulator on my home computer in the mid-80s and a flight simulator used today by the Air Force are both simulators.

Neither is fully equivalent to the simulated experience, and neither represents the other in strengths and weaknesses.

In that way the term “simulation” suits the point at hand.

A digital simulation of a manuscript or other artifact can be more or less capable than another

based on its ability to answer scholarly questions.

One simulation may be better than nothing, but another may be better, and more than one better still. For more discussion of terminology appropriate for digital images of manuscripts, including “facsimile,” “surrogate,” and “avatar,” see Dot Porter, “Is This Your Book? What We Call Digitized Manuscripts and Why It Matters.” Dot Porter Digital. Accessed October 26, 2019. http://www.dotporterdigital.org/is-this-your-book-what-digitization-does-to-manuscripts-and-what-we-can-do-about-it/. There may always be an ineffable “cool factor” of seeing or touching the original, so no simulation will reduce the value of the original. When it comes to objective scholarship, however, the modes of investigation should be explicit and in many cases those modes of investigation can be addressed with the proper mode of digitization. Anyone deciding which mode of digitization is justified or necessary will have to consider time and expense, but should also consider the modes of perception and inquiry that scholars bring to artifacts. With that we turn to examination of scholarly inquiry.

So let’s consider what scholars want from manuscripts and what scholars do with manuscripts.

At one level, basic but not insignificant, scholars want to read text from manuscripts.

For this use case, the manuscript is a text container with information to be extracted.

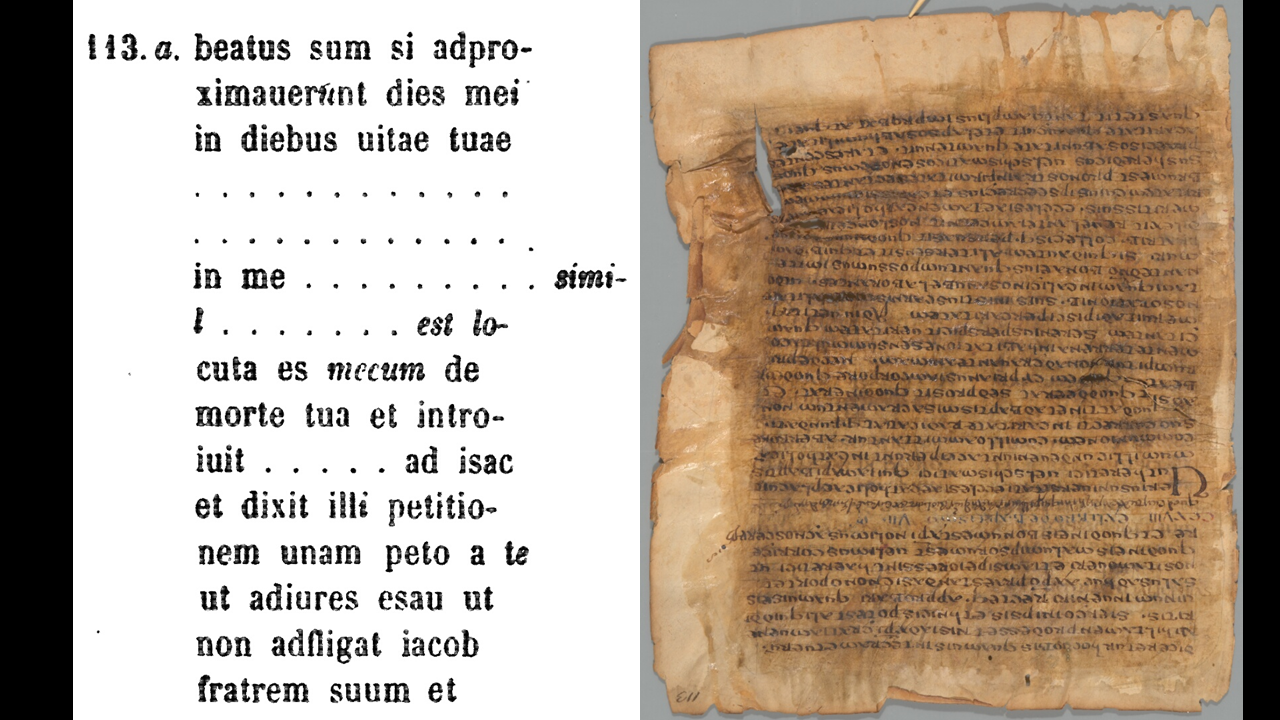

The ideal form of a text container is a published critical edition, and the manuscript serves as a basis for creating or improving the critical edition.

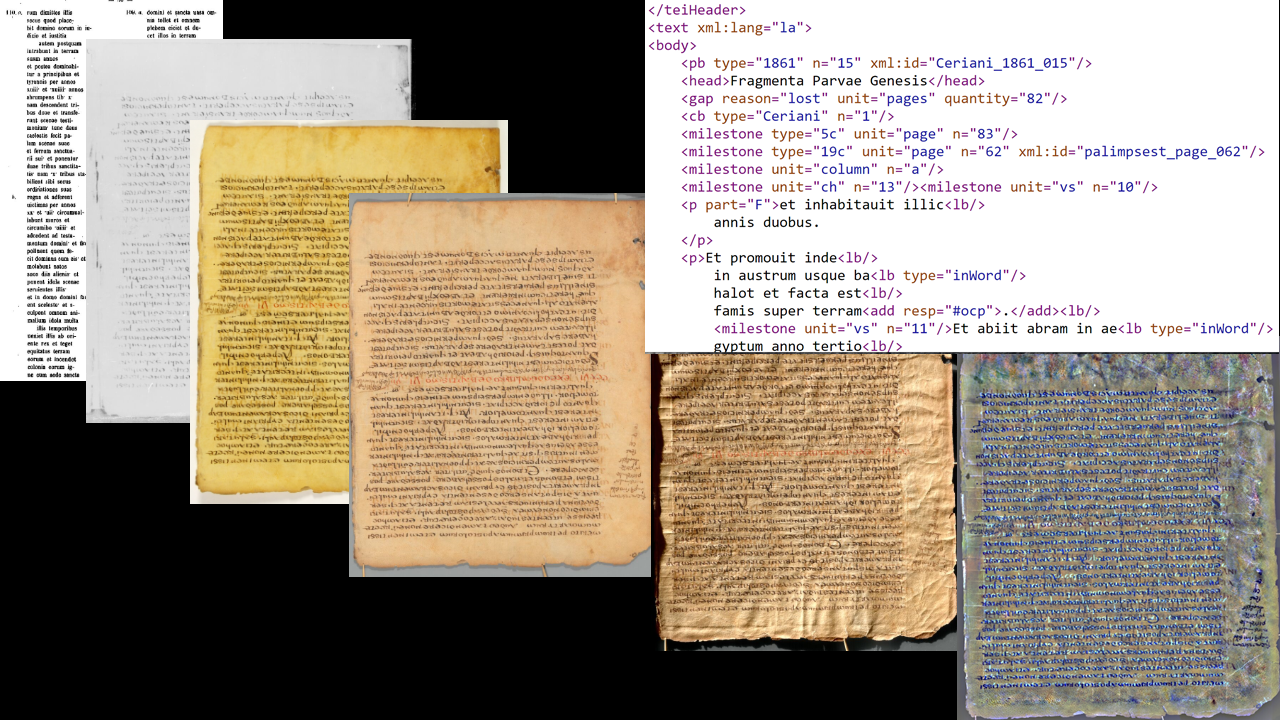

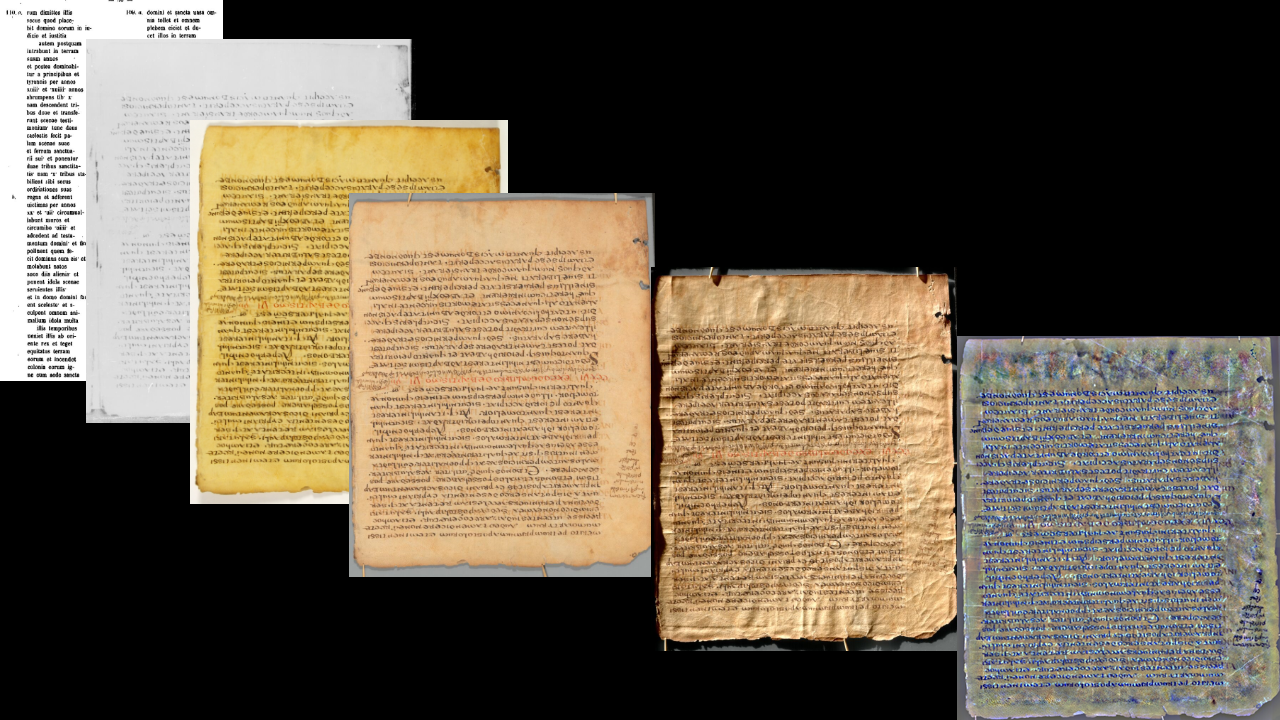

By this standard, the image of the 1861 critical edition on the left is the best, as it is certainly the most easily readable.

From there the next digital step would be to create a digital text from the digital image, preferably in TEI XML.

But even as a text container, the critical edition leaves much to be desired.

The editor boasts that his edition claims only what he saw,

but he is a loose editor by today’s standards.

Antonius Maria Ceriani,

Fragmenta latina evangelii S. Lucae, parvae genesis et assumptionis Mosis, Baruch, threni et epistola Jeremiae versionis syriacae Pauli telensis cum notis initio prolegomenon in integram ejusdem versionis editionem

(Monumenta Sacra et Profana ex Codicibus praesertim Bibliotheca Ambrosiana 1.1;

Milan: Typis et impensis Bibliothecae Ambrosianae,

1861)

p. 12.

He standardizes Latin orthography, such as providing SUM (I am) with an S where the manuscript has C.

This is helpful for a reader who wants clean Latin, but harmful for a scholar who wants to trace the history of Latin pronunciation reflected in scribal error.

He also expands nomina sacra to their full spellings.

Again this is helpful to a reader who understands Latin but not the system of shortening sacred names and titles.

However, this editorial choice obliterated evidence of the theology of the scribe.

When a Christian scribe copied a Jewish text, the scribe had to decide whether SPIRITUS refers to the Holy Spirit, in which case it is condensed to S-P-S,

or refers to a human spirit or simply the wind, in which case it is spelled out S-P-I-R-I-T-U-S.

When copying the Book of Jubilees where it says that Abraham had a patient spirit, the scribe used a nomen sacrum, most likely understanding patience as a gift of the Holy Spirit.

This fits with some early Christian understandings of the Holy Spirit, and contradicts others.

If we assume the scribe was an ordinary Christian, this gives us evidence independent of the preserved treatises by major figures.

This research was first presented in a scholarly venue at the annual meeting of the Catholic Biblical Association on July 29, 2019.

The paper presented by Vanessa Cypert and Todd Hanneken was titled, “Pneumatology in Early Christian Scribal Practices.”
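The loss of evidence described above is a consequence of print conventions, not a necessity of digital text. As a minimal sketch, assuming the standard TEI elements `choice`, `abbr`, and `expan` (and omitting the TEI namespace and all surrounding markup for brevity), a digital edition could record both what the scribe wrote and how an editor expands it. The function name `encode_abbreviation` is hypothetical.

```python
import xml.etree.ElementTree as ET


def encode_abbreviation(abbreviated, expanded):
    """Pair the scribe's abbreviated form with the editor's expansion."""
    choice = ET.Element("choice")
    ET.SubElement(choice, "abbr").text = abbreviated   # what the manuscript has
    ET.SubElement(choice, "expan").text = expanded     # what the editor supplies
    return choice


# The nomen sacrum discussed above: S-P-S for SPIRITUS.
element = encode_abbreviation("SPS", "SPIRITUS")
tei_fragment = ET.tostring(element, encoding="unicode")
print(tei_fragment)
```

A reader who wants clean Latin can be shown the expansion, while a scholar tracing scribal theology can search for every place the abbreviated form was used.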

Yet the biggest single problem with the 1861 edition imaged on the left is that it frequently notes indecipherable or uncertain text.

One would want to look at the manuscript to see if it is possible to improve on the editor’s guesses.

However, that is not possible with the naked eye.

The text copied in the fifth century was erased in the eighth century so the parchment could be reused to copy another text.

Earlier in the nineteenth century a chemical reagent was applied with the intent of making the erased text more legible.

If it succeeded in the short term, it failed in the long term, as no twentieth-century scholar was able to see more than the nineteenth-century editor.

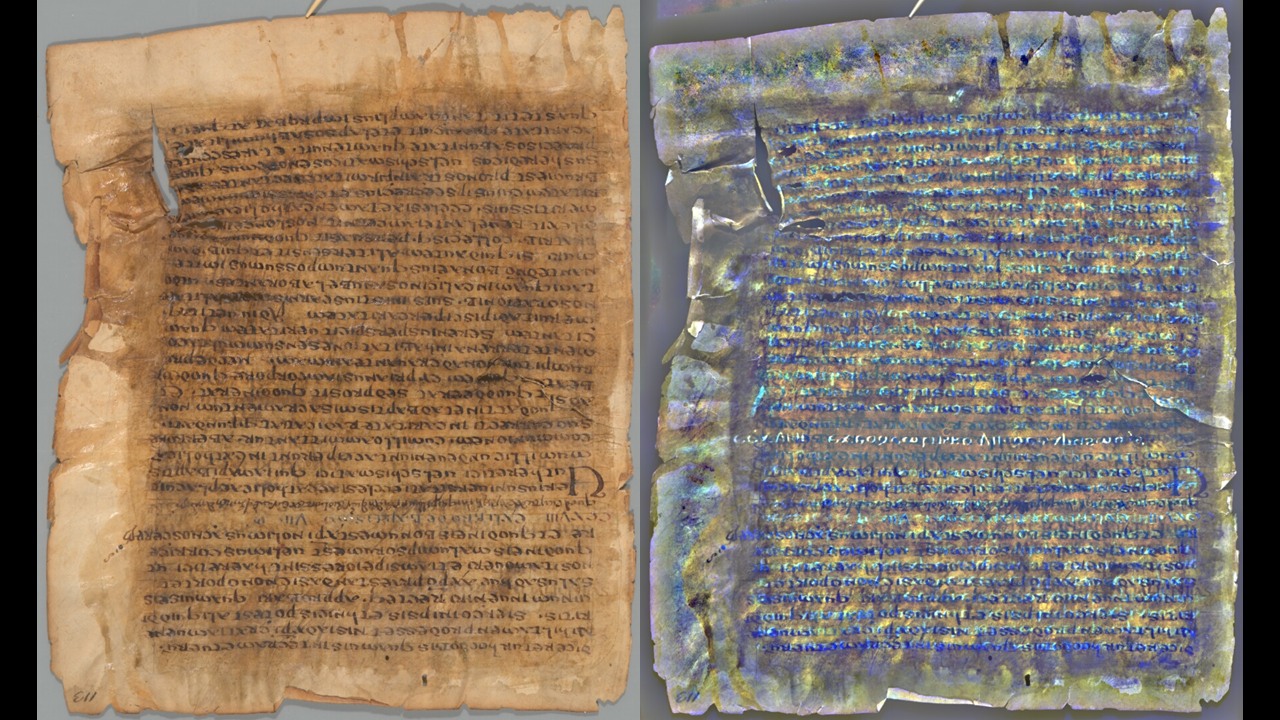

Not until the twenty-first century would digital multispectral imaging show contrasts invisible to the human eye.

To this we shall return.

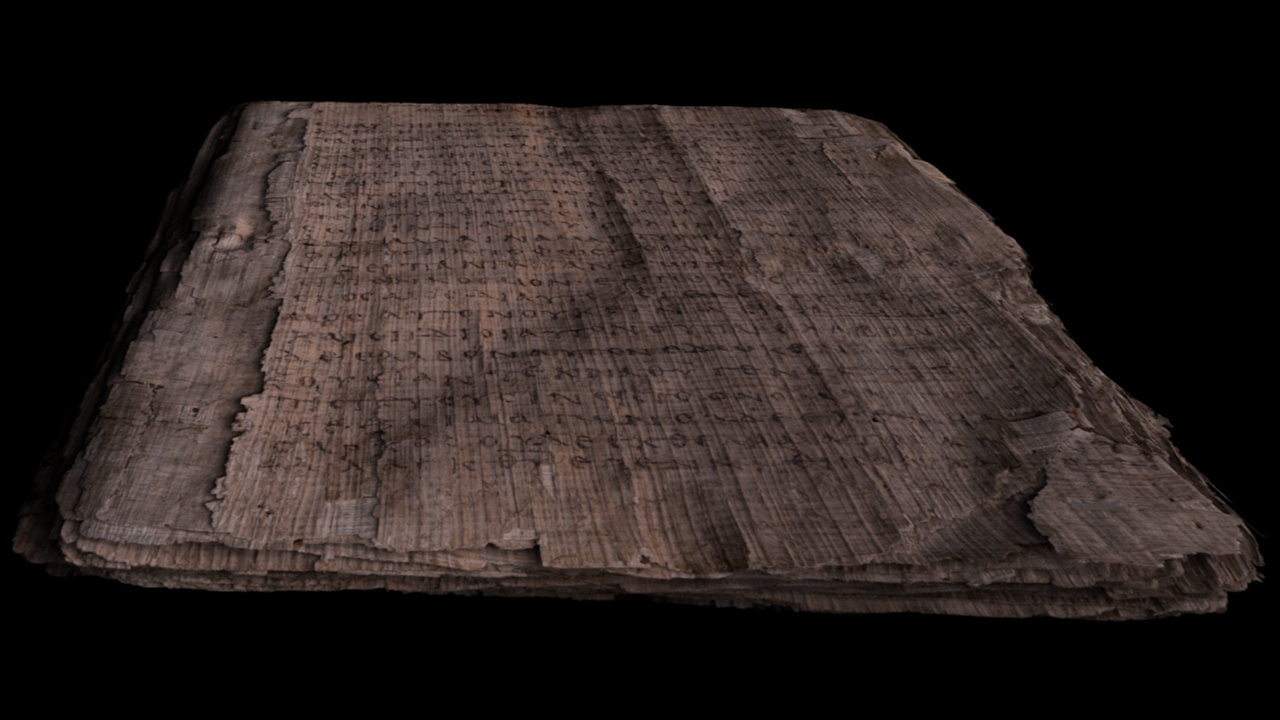

Interest in the manuscript as text container spills over into interest in the manuscript as artifact of scribal culture

when a scholar wishes to reconstruct the extent of the original fifth-century codex, before it was disassembled, erased, and reassembled in the eighth century.

From a literary perspective, one would like to know whether the Latin recension represented a longer or shorter form of the text.

The 1861 editor was able to reconstruct the sequence, but not the number of missing pages.

To answer these questions one would want to identify the hair and flesh sides of the parchment and locate any quire numbers, neither of which is indicated in the 1861 edition.

One would have to correct for a false bifolio, created when two folios were joined by glue.

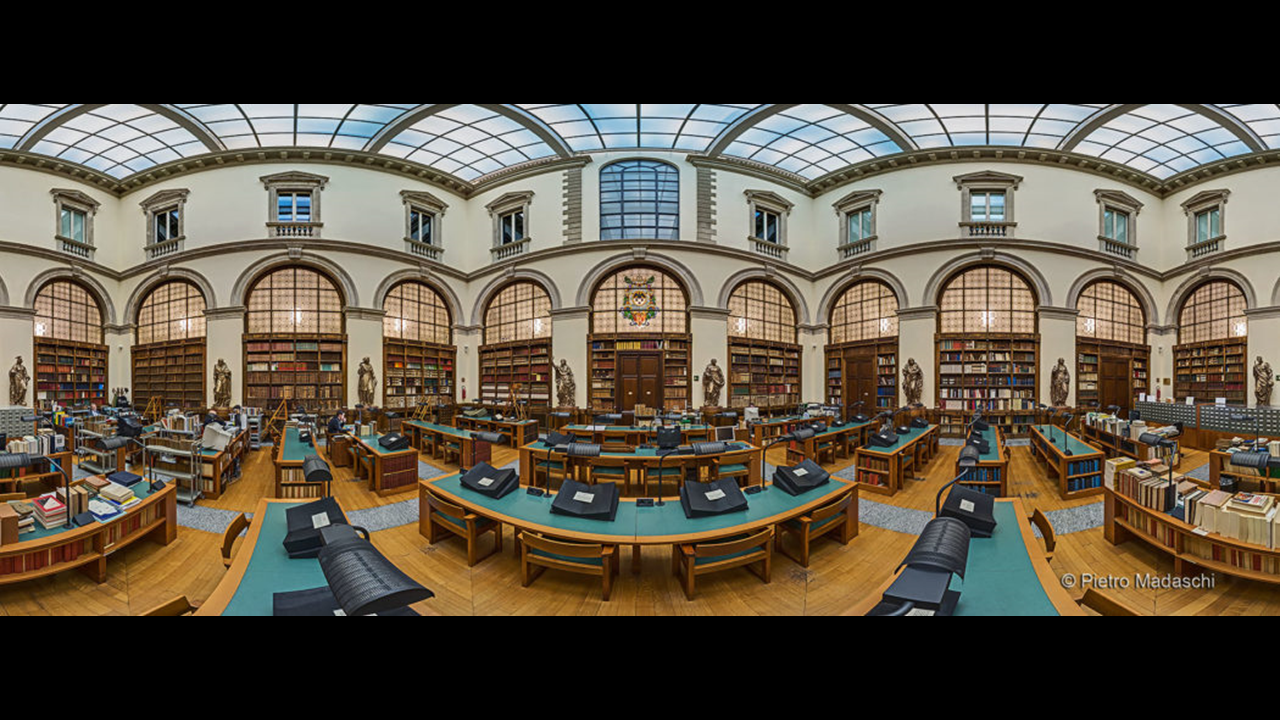

This could be done with first-hand inspection, but first-hand inspection is not available to just anyone, even those able to travel to Milan.

Among digital images, any captured with diffuse lighting would obscure the texture information necessary to answer those scholarly questions.

Other questions of scribal practice and codicology would be important for understanding when and where the codex was produced, and from there its relationship to other codices.

For more on the range of relevant topics, see

Raymond Clemens and Timothy Graham,

Introduction to Manuscript Studies

(Ithaca: Cornell, 2007).

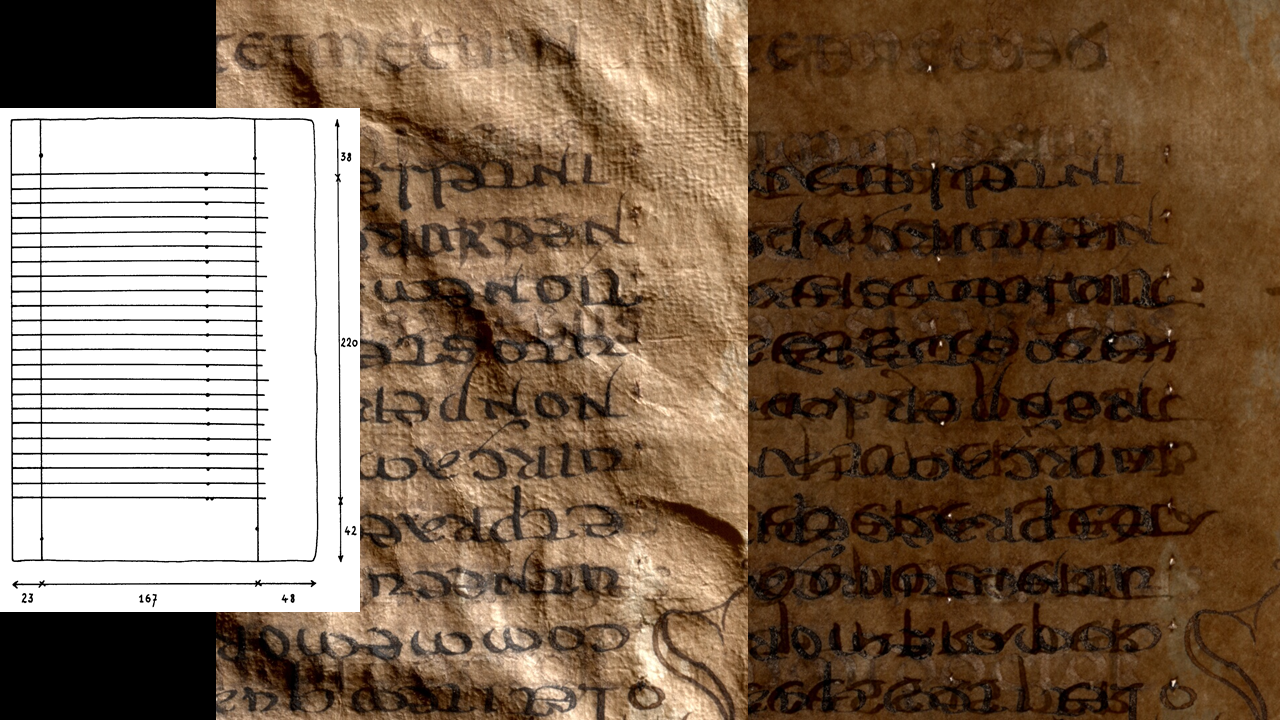

A scholar would examine and measure the scoring lines used to prepare the parchment for writing.

A scholar might create a line drawing for a critical edition.

That information could not be gathered from a simple digital image with diffuse illumination.

Raking illumination would help show the scoring lines.

The pin-pricks would be most evident from light passing through the parchment.

In the reading room this means holding the folio up to the light.

In digital imaging this means transmissive illumination, a backlight.

A conservator would have another set of questions and methods of investigation that may or may not be possible at different levels of digital imaging.

How has the condition of the parchment changed over time?

The scanned microfilm second from the left may be useless for reading, but it does show that some edges were lost in the past few decades.

Good color images over time could indicate the nature and effects of the chemical reagent applied to the parchment.

Unfortunately, the color image captured in 2011 is not a good color image.

It looks fine by itself, but juxtaposed to the fourth image, the differences are striking.

The differences are certainly attributable to the quality of imaging.

The third image was captured by the library staff using a DSLR camera.

The fourth image was captured with a multispectral imaging system carefully calibrated for accuracy of color.

Accurate color measurements are not just about making the simulation more realistic.

They could be useful for tracking the folios over time.

They could be useful for comparing folios across libraries that may have originated in the same time and place.

I could go on with other things a scholar might study, such as dry-point notation, marginalia, the corrosion of ink into or through parchment, and the properties of chemical reagent.

Suffice it to say there is much to study in manuscripts that cannot be investigated using a simple color image alone.

Even basic questions such as distinguishing one ink from another, or a hole, or fly droppings, or some other accretion can be ambiguous in basic images.

The value of going beyond the basics of digitization is no less for artifacts other than manuscripts.

Additional information could be observed specifically to study the reagent, or generally to get a feel of the properties of the manuscript.

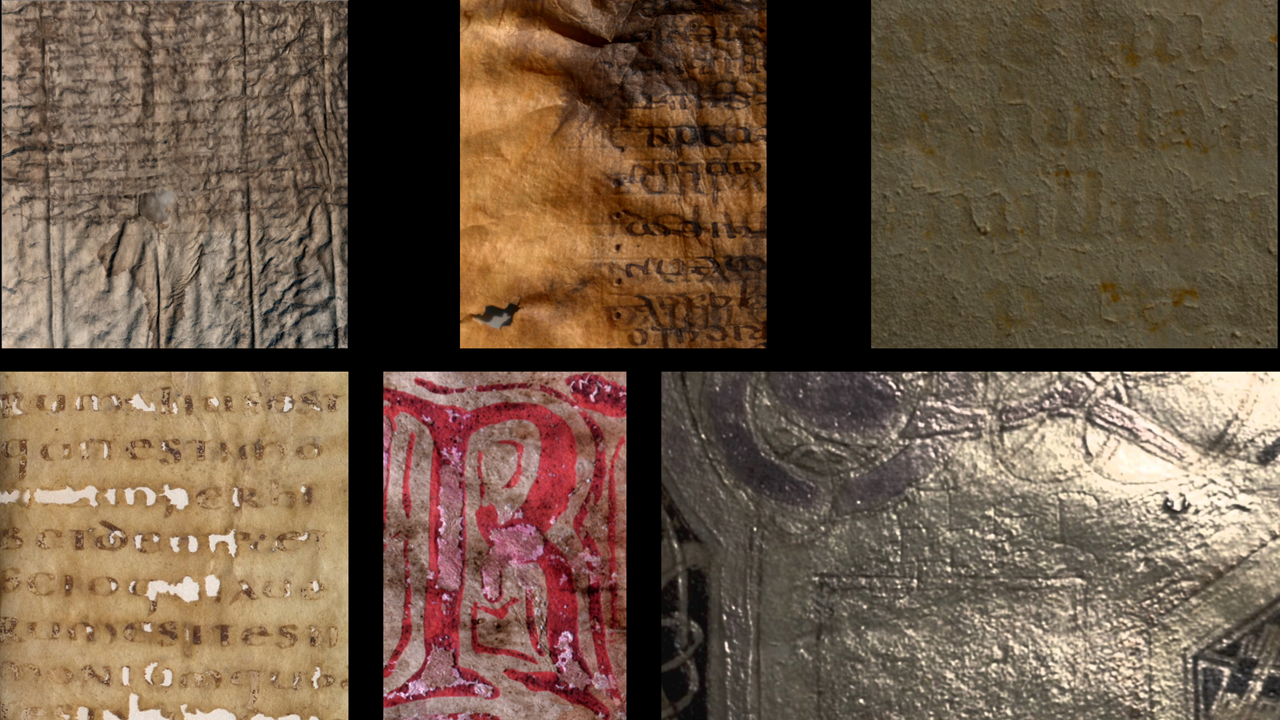

The reagent was thick enough to capture small bubbles, which are visible at very high spatial resolution.

Some of the reagent is also shiny, which imaging science calls specularity.

Diffuse illumination would not show specularity.

Specularity is most intuitively digitized with Reflectance Transformation Imaging (RTI), to which I shall return.

Other scholarly questions that come down to texture and specularity include dry-point notation.

A scribe or reader could make notes by pressing an uninked stylus against the parchment.

These notes would never be seen with simple digital imaging, and even upon first-hand observation could be easily missed.

A conservator or reader would also want to be able to identify the materials on the page.

A minimal digital image can leave a scholar wondering if a mark is ink from one scribe or another, or a hole, or fly droppings, or some other accretion.

Often these judgments are easy with first-hand experience.

We can see the texture of the marking, particularly relative to the parchment.

The right light might show if it has exactly the same color and specularity as ink on the page.

We might resort to an ultraviolet light to show whether it fluoresces in the same way as other materials on the page.

Before evaluating how a digital simulation compares to first-hand experience,

we should examine what happens upon first-hand experience and what scholarly questions are being investigated.

For an excellent examination of the relationship between experience, perception, and epistemology with respect to scholarly examination of manuscripts in real life and in digital simulations, see

William Endres,

“More than Meets the Eye: Going 3D with an Early Medieval Manuscript.”

The Digital Humanities Institute,

Proceedings of the Digital Humanities Congress 2012,

edited by Clare Mills, Michael Pidd, and Esther Ward.

Accessed October 26, 2019.

https://www.dhi.ac.uk/openbook/chapter/dhc2012-endres.

See also,

Bill Endres,

Digitizing Medieval Manuscripts: The St. Chad Gospels, Materiality, Recoveries, and Representation in 2D and 3D

(Medieval Media Cultures.

Leeds: Arc Humanities, 2019).

Another way to think about what scholars want is to picture what scholars do.

What happens when a scholar studies a manuscript in a reading room?

What does first-hand experience look like?

I think much of it falls under the category of movement.

We move our heads, or the light, or the folio hoping to see something a little differently.

Often no one view suffices to answer a question, much less every question.

We require many views, and strongly prefer interactivity and movement.

When people say that there is no substitute for first-hand experience,

I think much of what they mean comes down to interactivity

and what that can tell us about the materials, texture, and specularity of the artifact.

Certainly a simple color photo, even with a good camera and lighting, will compare poorly as a simulation of first-hand experience. Even spectral imaging and RTI should not be thought of as a single-image solution. Deep digitization requires many images presented in such a way that the scholar can interact with the simulation to discover answers to a wide variety of scholarly questions.

To be sure, there will always be a special value to unmediated experience, and no simulation will devalue the possession of the museum or library.

A live performance will always have value that a recording will not replace.

If we focus not on prestige but the ability to answer scholarly questions, a digital simulation can often suffice and indeed surpass first-hand experience.

The truth is that first-hand experience is not always that great.

Even those with the resources to travel and permission to touch the artifact will be limited by the capacity of the human eye.

To mention the obvious, not everyone has the privilege of first-hand experience.

First-hand experience is limited by the financial resources of the scholar and by the conservation interests of the artifact.

Our ability to pause and play back our memories of first-hand experience is notoriously limited. Our ability to persuade others based on our memories or even notes from first-hand experience is also limited. A less obvious limitation of first-hand experience is that human perception, and the eye in particular, is limited. The human eye has receptors for three colors within the range from violet to red, excluding ultraviolet and infrared. The capture of data at higher and wider color resolution, and the processing of that data into images we can see, has been the contribution of multispectral imaging.

In the interest of saving time for discussion, I’d like to assume that you already know as much as you care to know about spectral imaging, or are willing to ask later. What’s important here is that spectral imaging is kind of the opposite of first-hand experience in that it shows what the naked eye cannot see, but typically requires some delay for processing. In the past it has been used mostly for accurate color measurements and for enhancing legibility of text, but could also be useful for material study of a manuscript as more than a text container.

Multispectral imaging can be thought of as the opposite of first-hand experience in as much as it is concerned with overcoming the natural limitations of the human eye

and typically produces results after processing, not in real time.

It can produce color images more accurately and consistently than a conventional camera,

but is more often deemed a necessary level of digitization when information of interest, text or otherwise, is not legible to the human eye.

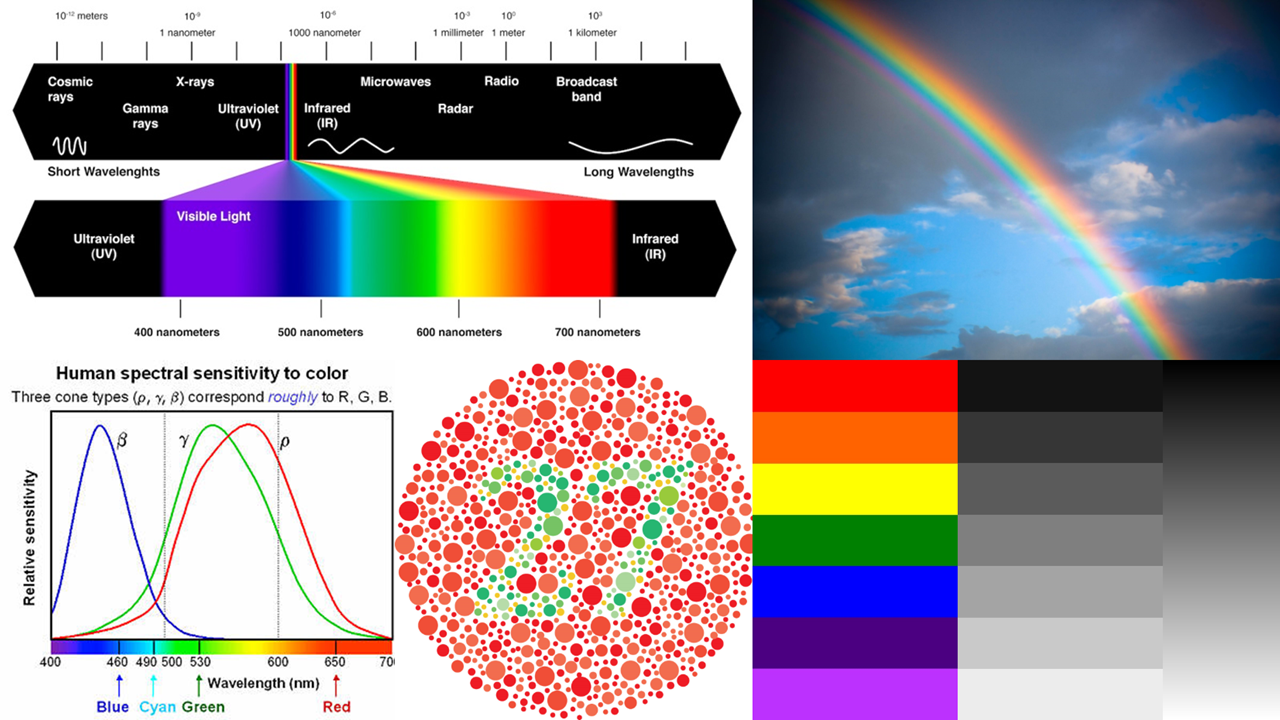

The problem with the human eye is that we have only three color receptors, far fewer than some other species have and far fewer than the sixteen or more wavelengths that can be resolved with multispectral imaging.

A human is considered “color blind” at two receptors, but even what we might call “normal” is blind compared to the colors there to be seen.

As you can see from the graph on the lower left, the three receptors are not distributed as evenly as would be ideal.

The limits of our color resolution mean we see a rainbow in bands of color, not unlike how we see low spatial resolution as pixelated.

We can see many levels of brightness, so the gradient on the far right appears smooth to us.

If our brightness resolution were as poor as our color resolution, the black-white gradient would be banded into seven bars like the rainbow.

The low standards of “normal” color resolution make it possible to capture a color image in one photograph by dividing the pixels into red only, green only, and blue only

(which introduces its own problems in spatial resolution and color accuracy).

The result for scholarship is that we have trouble distinguishing browns.

Brown means all three color receptors are triggered somewhat, but not necessarily with the same spectral fingerprint.

For damaged parchment and other organic materials,

the brown of erased ink and the brown of parchment can look very similar to the human eye while having very different chemical composition and very different spectral fingerprints.
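The point can be made concrete with a toy calculation. The following sketch uses invented numbers, not measurements from the palimpsest: the three receptors, the six wavelength bands, and both reflectance spectra are hypothetical. It shows two spectra that differ in every band yet collapse to identical values once reduced to three receptors.

```python
# Hypothetical receptors: each one simply sums two adjacent wavelength bands.
# Real receptor curves overlap and are unevenly spaced; this is a toy model.
RECEPTORS = {
    "short":  [1, 1, 0, 0, 0, 0],
    "medium": [0, 0, 1, 1, 0, 0],
    "long":   [0, 0, 0, 0, 1, 1],
}

# Invented reflectance values at six wavelength bands (not measured data).
PARCHMENT_BROWN = [0.30, 0.30, 0.40, 0.40, 0.50, 0.50]
ERASED_INK_BROWN = [0.20, 0.40, 0.45, 0.35, 0.60, 0.40]


def receptor_response(spectrum):
    """Collapse a six-band spectrum into the three values the eye receives."""
    return {
        name: round(sum(w * s for w, s in zip(weights, spectrum)), 6)
        for name, weights in RECEPTORS.items()
    }


def differing_bands(spectrum_a, spectrum_b, tolerance=0.01):
    """List the band indexes where two spectra measurably differ."""
    return [
        i for i, (a, b) in enumerate(zip(spectrum_a, spectrum_b))
        if abs(a - b) > tolerance
    ]


eye_parchment = receptor_response(PARCHMENT_BROWN)
eye_ink = receptor_response(ERASED_INK_BROWN)
bands_that_differ = differing_bands(PARCHMENT_BROWN, ERASED_INK_BROWN)

print("Same to the eye:", eye_parchment == eye_ink)
print("Bands that differ:", bands_that_differ)
```

With only three sums to go on, the two browns are indistinguishable. A sensor that records each band separately can tell them apart in all six.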

The human eye is also limited in range of color perception.

We see from red to violet, excluding infrared at one end and ultraviolet at the other end.

The benefits of infrared photography were known long before digital imaging.

What changes with digital imaging is that every pixel has a numerical value.

As long as the camera and object remain still through a series of captures, the number for how much infrared light is reflected can be processed in relationship to all the other numbers.

Today it is not uncommon for more than fifty captures to measure each pixel illuminated under different wavelengths and with different filters for fluoresced light.

Some of this processing is relatively simple, but it can also be incredibly advanced, even using machine learning.
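To give a sense of the relatively simple end of that range, here is a minimal sketch of one per-pixel operation, a normalized difference between two registered captures. The choice of operation, the function name, and the tiny two-by-two pixel grids are my own assumptions for illustration; actual processing pipelines combine many more captures and use more sophisticated methods.

```python
def normalized_difference(band_a, band_b):
    """Per-pixel (a - b) / (a + b) for two registered captures of equal size."""
    result = []
    for row_a, row_b in zip(band_a, band_b):
        out_row = []
        for a, b in zip(row_a, row_b):
            total = a + b
            # Guard against division by zero where both captures are black.
            out_row.append((a - b) / total if total else 0.0)
        result.append(out_row)
    return result


# Invented pixel values: the lower-right pixel stands in for erased ink,
# which here reflects little infrared but shows up under ultraviolet.
infrared_reflectance = [[200, 200],
                        [200, 60]]
uv_fluorescence = [[100, 100],
                   [100, 180]]

contrast = normalized_difference(infrared_reflectance, uv_fluorescence)
for row in contrast:
    print([round(value, 3) for value in row])
```

The operation only works because each pixel in one capture corresponds to the same spot on the object in the other, which is why the camera and object must remain still through the series of captures.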

We also knew before digital imaging that an ultraviolet lamp could be useful for investigation. An ultraviolet lamp with the human eye shows fluorescence, not reflected ultraviolet. Reflectance is when light bounces off a material. Fluorescence is when light of one wavelength strikes an object and excites it, causing it to emit light of a different wavelength. Spectral imaging is able to measure both reflectance and fluorescence, and at higher color resolution than the eye. Not all ultraviolet has the same effect in causing fluorescence. A shorter or longer wavelength within the ultraviolet range can make a big difference. Finely tuned ultraviolet fluorescence has had dramatic benefits when studying contrasts between organic and inorganic materials.

Another measurement that has proven useful, in addition to reflected and fluoresced light, is transmitted light. This shines light from behind and measures how much light of different wavelengths passes through. In the study of manuscripts this shows holes from damage or the pin pricks from preparation for margins and lines. It also shows thin spots in the parchment. Ink sometimes corrodes the surface of the parchment such that more light passes through.

The strength of spectral imaging is that it shows features not visible to the human eye. That strength also creates a challenge in that we do not know what spectral imaging will show until we try it. Spectral imaging developed mostly as applied to palimpsests and other damaged manuscripts when we knew text was there but couldn’t read it. In some cases, spectral imaging found erased text where humans expected there to be none. It is intriguing to imagine what might be discovered following wider application, beyond manuscripts. One initiative underway is to create more low-cost systems, not so much to replicate the quality of high-end systems but to help identify candidates for more advanced imaging. As cell phones are designed with more cameras, new possibilities for instant spectral sampling are opening up. Cell phones are also offering more and more processing power. Presently spectral imaging requires separate processing. A cell phone spectral sampler might also help predict what methods will be most beneficial. Improved understanding of the chemistry of manuscripts may help as well. High resolution spectroscopy is not about replacing multispectral imaging, but making it more efficient. Right now we cannot reliably predict what methods will be beneficial until after at least some capture and processing has already taken place. This can lead to overkill, but one should also be careful how one defines data as useful. In the past, the ability to recover illegible text was the dominant standard. However, if one imagines conservation and scholarship that approaches a manuscript as more than just a text container, much more data may prove useful. Similarly, processing procedures may be beneficial by metrics other than text recovery. Scholars should not expect spectral imaging to create a single great picture that can be archived and studied to answer every question. Rather, the strength of spectral imaging is its ability to create many images visible to humans showing things otherwise not visible to humans. Making that set of images useful to scholarship requires careful attention to the viewing environments used to study all those images. Viewing environments are outside the scope of the present paper.

Almost everything discussed under spectral imaging ultimately gets at the chemical composition of each pixel captured.

Spectral imaging excels at distinguishing the chemical composition of materials at each pixel captured.

Parchment, different inks, fly droppings, mold, etc. all have different chemical compositions and therefore different spectral signatures, that is, different properties of reflectance, fluorescence, and transmission of light.

When we can resolve those contrasts at 600 pixels per inch we can see any deliberate, and many not deliberate, additions of materials such as ink.

However, the addition of material is only one of the ways that humans express meaning in durable artifacts.

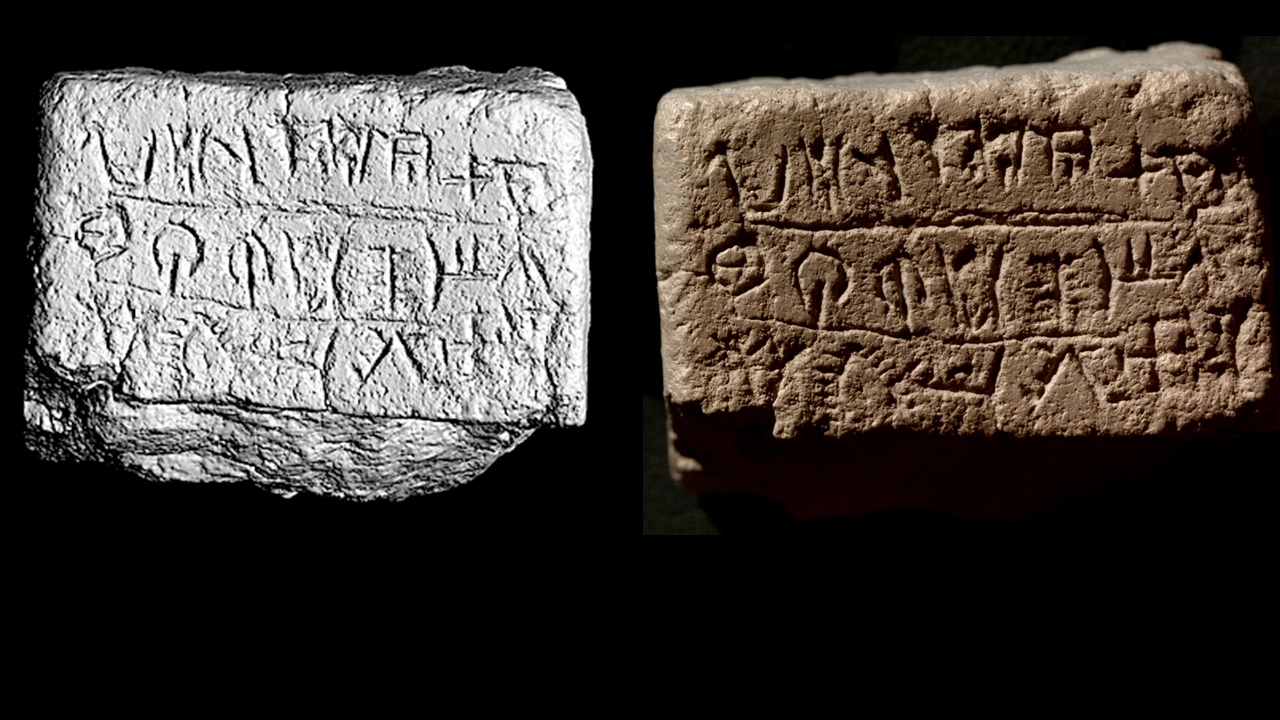

Cuneiform, coins, and inscriptions may have no meaningful variation in the chemical composition of the object.

Rather, the important information is in the texture and shape.

For almost any object the shape and texture can be relevant to scholarly inquiry and understanding the physicality of the object.

Even for the study of a manuscript as a text container,

it has proven possible to read the outline of a letter where now-missing ink once corroded the surface of the parchment.

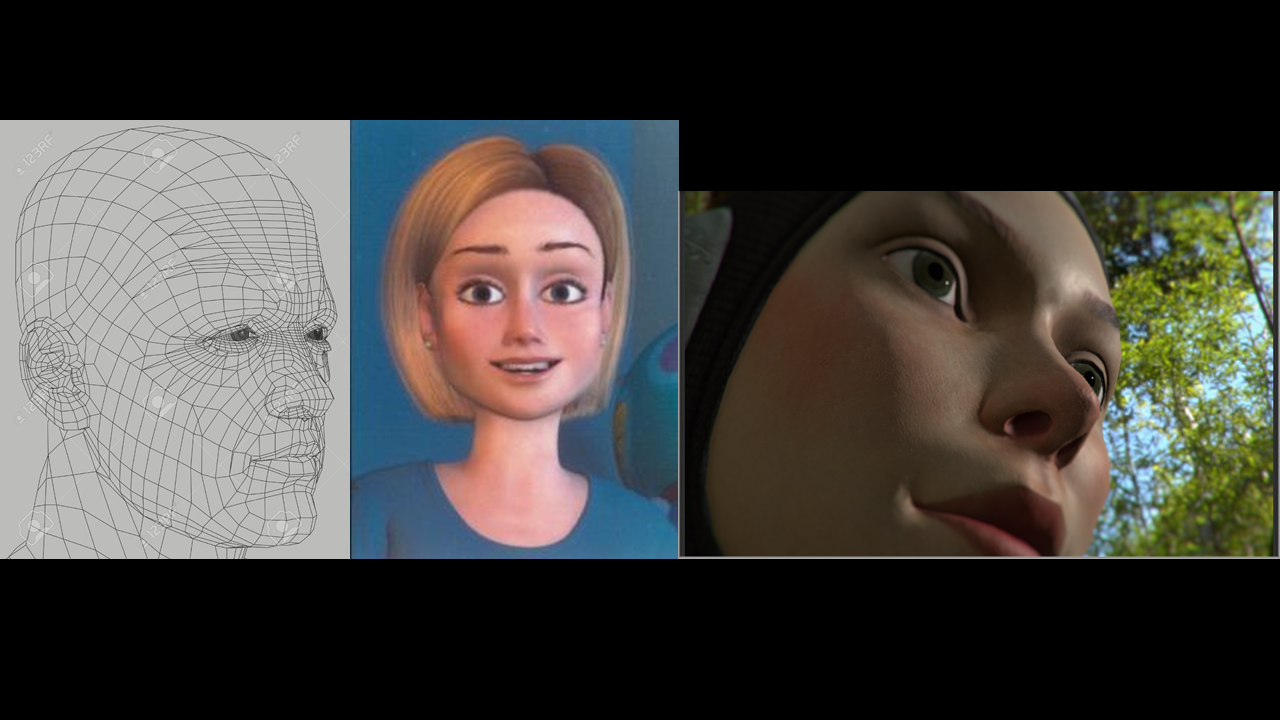

When considering how to digitize objects with texture and shape, it is important to understand the distinction.

Raking illumination gives a static sense of each.

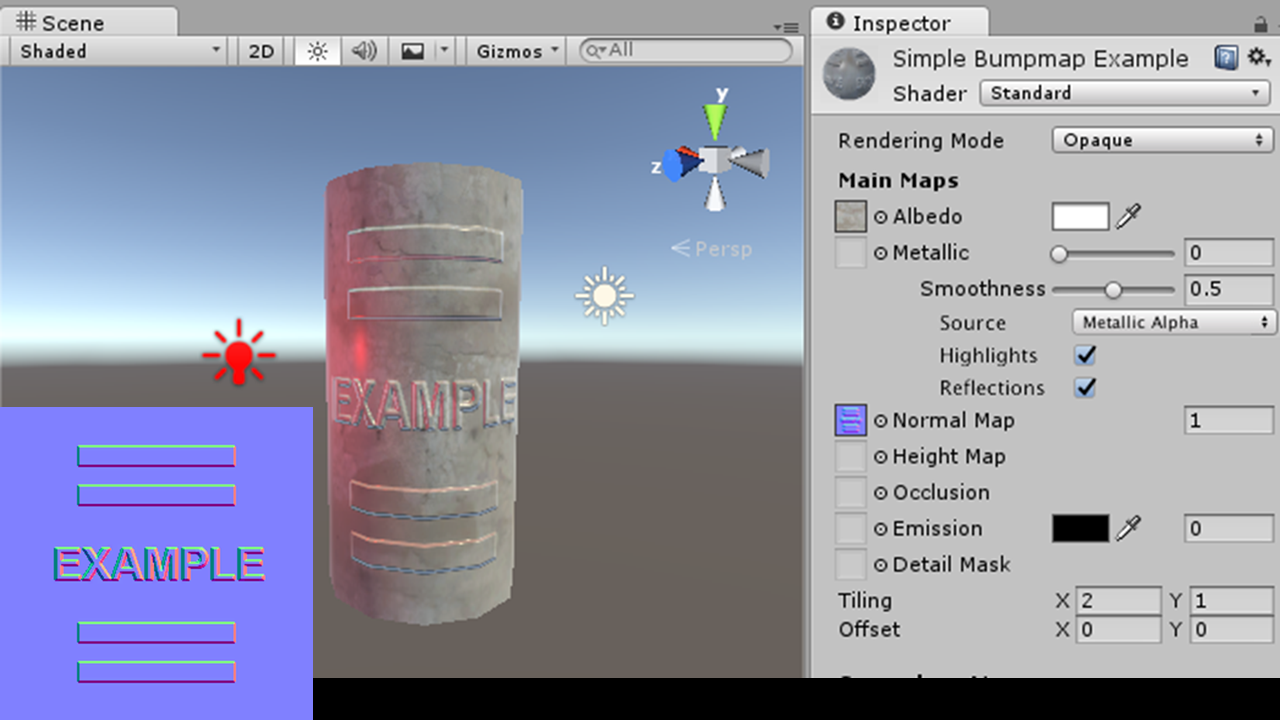

Reflectance Transformation Imaging (RTI) specializes in texture and specularity, but assumes a relatively flat frame.

Laser scanning and photogrammetry specialize in shape but do little for the fine texture and specularity of each of many small surfaces.

A good 3D model has accurately captured information for both shape (that is geometry) and texture (that is surface shaders).

Right now, a viewer based on RTI can simplify the shape of the object, or a viewer based on laser scanning or photogrammetry can simplify the surface shading.

Depending on the object being studied, both may be necessary.

Someday, if not now, all the data will be properly utilized in a single viewer.

Raking illumination means the photographer lights the object from a low angle close to the surface.

This causes the highlights and shadows that allow human observers to perceive texture.

A diffusely illuminated image of an inscription, such as the image of the Amman Citadel Inscription on the left, is unreadable.

We are all accustomed to studying texture from raking light images, but there are a few limitations.

First, we are dependent on the photographer to identify the best angle of illumination.

Even if the photographer succeeds for one region of interest, that one angle is not likely to suffice for all questions a scholar might ask.

The resulting images still rely on the human imagination, which has to interpret a dark spot as either a shadow or a pigment.

Finally, a raking light image lacks the interactivity and enhancements that characterize RTI and laser scanning.

RTI could be thought of as a large collection of raking light images, and at capture that is essentially what happens. Either a literal dome or a virtual dome illuminates an object from fifty or so directions. A virtual dome could be a swinging arc or a handheld flash held at many points equidistant from the object. RTI does not simply store the sum of raking light still captures. Rather, it calculates the coefficients of a mathematical formula that best fit the captured data.

The viewer software can then calculate the brightness of a pixel as a function of light position, not limited to the light positions actually captured. That means non-captured light positions can be extrapolated. The formula can also be modified to enhance specularity and so forth. All digital simulations have the possibility of introducing confusion, and as a scholar I would prefer an argument go back to one of the captured images. However, the ability to change the lighting in a single interactive viewer goes a long way to simulating first-hand experience.
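For those who want to see the idea in miniature, the following sketch fits one pixel. It assumes the six-term biquadratic polynomial used in Polynomial Texture Maps, which is one common formula and not necessarily the one used by any particular RTI software. The nine light positions, the "true" coefficients, and all function names are invented for the demonstration; a real capture would use fifty or so light positions and repeat the fit for every pixel.

```python
def ptm_basis(lu, lv):
    """Six biquadratic terms for a light direction projected onto the image plane."""
    return [lu * lu, lv * lv, lu * lv, lu, lv, 1.0]


def solve_linear(matrix, vector):
    """Solve a small square system by Gaussian elimination with partial pivoting."""
    n = len(vector)
    aug = [row[:] + [vector[i]] for i, row in enumerate(matrix)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(aug[r][col]))
        aug[col], aug[pivot] = aug[pivot], aug[col]
        for r in range(col + 1, n):
            factor = aug[r][col] / aug[col][col]
            for c in range(col, n + 1):
                aug[r][c] -= factor * aug[col][c]
    solution = [0.0] * n
    for r in range(n - 1, -1, -1):
        tail = sum(aug[r][c] * solution[c] for c in range(r + 1, n))
        solution[r] = (aug[r][n] - tail) / aug[r][r]
    return solution


def fit_pixel(light_dirs, brightness):
    """Least-squares coefficients for one pixel, via the normal equations."""
    rows = [ptm_basis(lu, lv) for lu, lv in light_dirs]
    ata = [[sum(r[i] * r[j] for r in rows) for j in range(6)] for i in range(6)]
    atb = [sum(r[i] * b for r, b in zip(rows, brightness)) for i in range(6)]
    return solve_linear(ata, atb)


def relight(coeffs, lu, lv):
    """Predicted brightness for any light direction, captured or not."""
    return sum(c * t for c, t in zip(coeffs, ptm_basis(lu, lv)))


# Invented data: nine light positions and brightness values generated from
# known coefficients, so the fit can be checked against the truth.
TRUE_COEFFS = [-0.3, -0.2, 0.1, 0.4, -0.1, 0.5]
light_dirs = [(lu, lv) for lu in (-0.6, 0.0, 0.6) for lv in (-0.6, 0.0, 0.6)]
brightness = [relight(TRUE_COEFFS, lu, lv) for lu, lv in light_dirs]

coeffs = fit_pixel(light_dirs, brightness)
# (0.3, -0.3) was never captured, yet the fitted formula can render it.
print(round(relight(coeffs, 0.3, -0.3), 4))
```

What is stored per pixel is the six coefficients, not the nine captures. That is why the viewer can render light positions that were never photographed, and also why I would prefer an argument go back to a captured image.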

In the interest of time I will have to skip a section on the relationship between RTI and laser scanning.

Suffice it to emphasize that the relationship is complementary,

as true 3D incorporates both shape and surface shaders, including texture and specularity.

I highly recommend catching the presentation of Bill Endres at eleven o’clock Friday morning,

“Sublime Complexities and Extensive Possibilities: Strategies for Building an Academic Virtual Reality System.”

Most RTI viewers allow pan, zoom, and manipulation of the light position.

There is one that uses the same data to simulate moving the eye to a lower angle.

This is fairly convincing insofar as the artifact is flat, but fails on steep sides.

RTI can handle fairly deep texture, such as this terracotta figurine, as long as the viewing angle remains the same.

RTI can image multiple sides of an object, but not move smoothly between them.

For an object with complex dimensionality of shape or a very large object, laser scanning and photogrammetry can provide important assistance.

For some objects, laser scanning and RTI may be closely comparable approaches, while for others only one may be viable.

Here we see two images of a very small undeciphered tablet from Cyprus, one based on laser scanning and the other based on RTI.

Laser scanning has the advantage that nothing is ever out of focus, and moving from one side to another, stopping somewhere in between, would be easy.

RTI has the advantage of higher spatial resolution and accurate rendering of color and shininess.

I wish to emphasize, however, that both modes of digitization are fully compatible.

A complete 3D model accounts for both the structure established by laser scanning and the texture and specularity established by RTI.

Laser scanning alone only establishes where the boundary of the object is found, not its color or shininess.

A 3D engine will ultimately think of boundaries as a series of triangles, even if very many triangles with very small surfaces.

If we stopped there we would have what is called a wireframe.

Typically each one of those surfaces will be shaded in some way, either with a solid color or gradient or artificially generated map of texture and specularity.

So far, fully integrating RTI data for texture and specularity into 3D engines and viewers, including virtual reality, is on the cutting edge. Bill Endres is at the forefront of viewing manuscripts in virtual reality environments. Bill Endres, Digitizing Medieval Manuscripts. See also the work of Chantal Stein, Emily Frank, and Sebastian Heath, “Blending Computational Imaging Techniques: Experiments in Combining Reflectance Transformation Imaging (RTI) and Photogrammetry in Blender,” https://www.metmuseum.org/about-the-met/conservation-and-scientific-research/projects/rti-symposium/day-2-pm. More information is on the website of one of the authors, http://www.emilybeatricefrank.com/combining-computational-imaging-techniques/. True 3D will not make RTI obsolete, but will make it even more important. A simulation of a manuscript page in a 3D viewer that has no texture and specularity, or only artificially generated texture and specularity, could be misleading to the point of being damaging to scholarly inquiry.

With appropriate foresight of possibilities on the horizon, I wish to close my remarks today with information about what is available today.

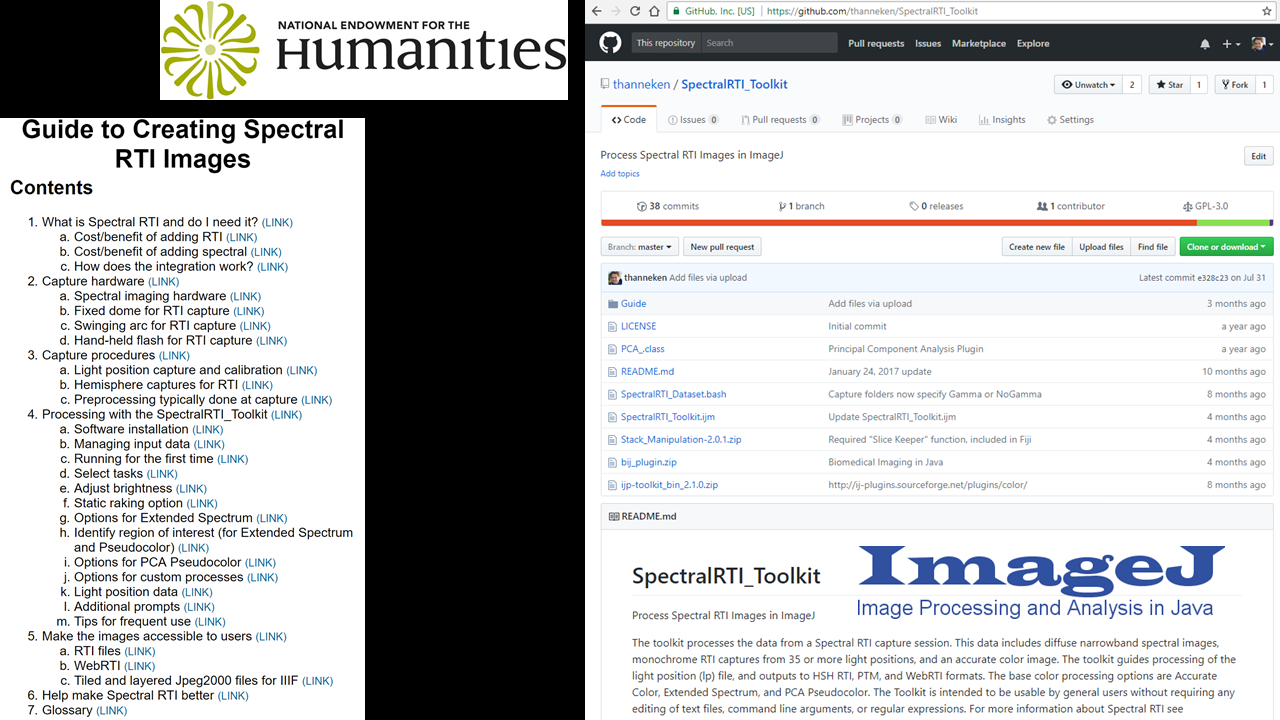

With the support of two grants from the National Endowment for the Humanities, Office of Digital Humanities, my own project was able to develop tools for integrating multispectral imaging and RTI.

We tested different methods of combination and identified the most efficient.

Todd R. Hanneken,

“Integrating Spectral and Reflectance Transformation Imaging Technologies for the Digitization of Manuscripts and Other Cultural Artifacts.”

The Jubilees Palimpsest Project.

Accessed October 26, 2019.

http://jubilees.stmarytx.edu/integrating/.

We tested the methods on several objects, including a complete palimpsest, and made the captured data publicly available.

Todd R. Hanneken,

“Ambrosiana Archive.”

The Jubilees Palimpsest Project.

Accessed October 26, 2019.

http://palimpsest.stmarytx.edu/AmbrosianaArchive/.

We developed one way to automate capture of the raking illumination images, and also tested the inexpensive handheld flash option.

We developed free software to perform all the processing.

Todd R. Hanneken and Bryan Haberberger,

“SpectralRTI_Toolkit.”

GitHub.

Accessed October 26, 2019.

https://github.com/thanneken/SpectralRTI_Toolkit/.

It utilizes ImageJ, and can be used off the shelf without editing text files or using command line arguments.

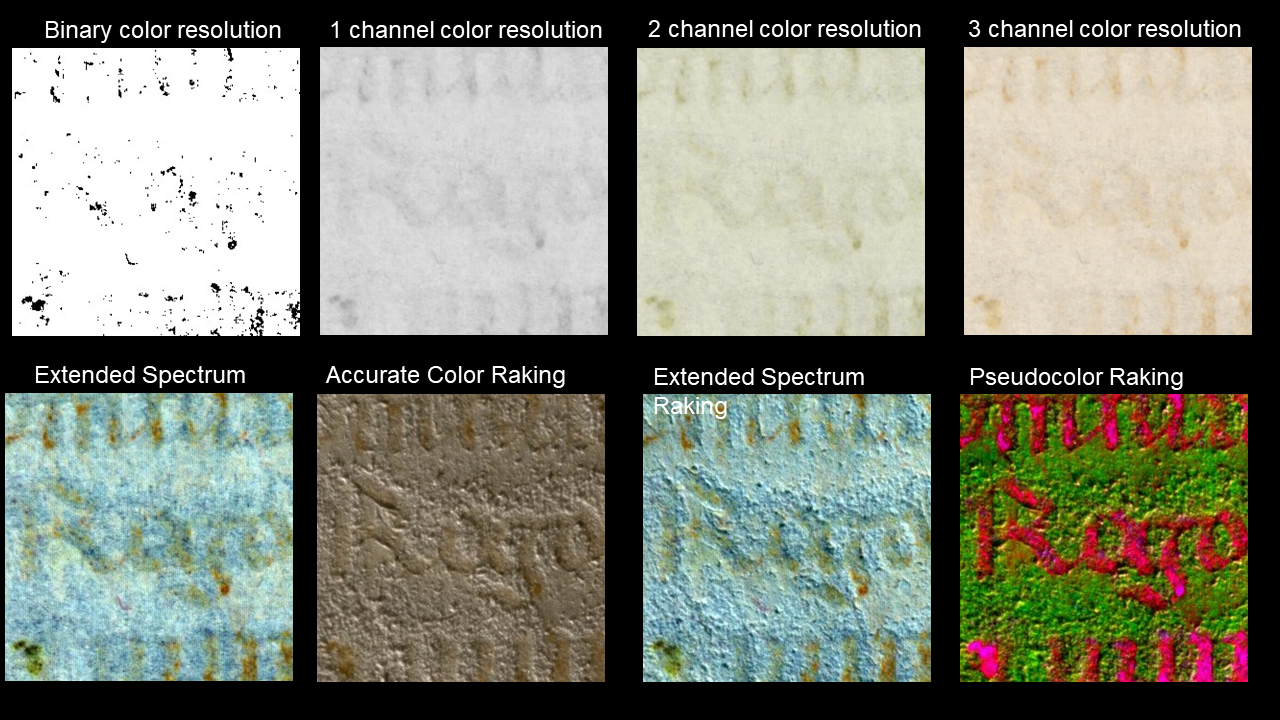

The software itself supports accurate color, spectral enhancements, transmissive illumination, and principal component analysis of reflectance and fluorescence, and it works easily with images processed in other software.

The software outputs files ready for viewing and publication using IIIF, WebRTI, and any RTI viewer.

We prepared detailed documentation that starts with assessing the utility of Spectral RTI and then covers the capture methods and especially the software for processing.

Todd R. Hanneken,

“Guide to Creating Spectral RTI Images.”

The Jubilees Palimpsest Project.

Accessed October 26, 2019.

http://jubilees.stmarytx.edu/spectralrtiguide/.

All of this is online and free.

The fundamental premise that makes spectral imaging and reflectance transformation imaging compatible is the distinction between luminance and chrominance.

One familiar color space, RGB, is not oriented around that distinction, but others, such as YCbCr, are.

The RGB color space has three channels to measure the brightness of red, green, and blue respectively.

The YCbCr color space also uses three channels but dedicates one channel to luminance and the other two to color.

The same data is measured using a different coordinate system.

Consider by analogy how physical space can be measured on Cartesian coordinates (x, y, z) or spherical coordinates (r, θ, φ).
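The change of coordinate system between RGB and YCbCr can be sketched in a few lines. The example assumes the full-range BT.601 coefficients used by JPEG, which is only one of several YCbCr definitions; nothing here specifies which variant is intended, and the function names are hypothetical.

```python
def rgb_to_ycbcr(r, g, b):
    """RGB (0-255) to full-range BT.601 YCbCr (assumed variant, as in JPEG)."""
    y = 0.299 * r + 0.587 * g + 0.114 * b                # luminance
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b     # higher where more blue
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b     # higher where more red
    return y, cb, cr

def ycbcr_to_rgb(y, cb, cr):
    """Inverse conversion; values are left as floats and are not clipped."""
    r = y + 1.402 * (cr - 128)
    g = y - 0.344136 * (cb - 128) - 0.714136 * (cr - 128)
    b = y + 1.772 * (cb - 128)
    return r, g, b

if __name__ == "__main__":
    print(rgb_to_ycbcr(200, 30, 60))
    print(ycbcr_to_rgb(*rgb_to_ycbcr(200, 30, 60)))
```

A neutral gray has no chrominance in this system: both color channels sit at the midpoint of 128, and only the luminance channel changes as the gray becomes lighter or darker.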

The distinction was also important when television went from monochrome to color.

A broadcast could continue to be compatible with older televisions by keeping the luminance channel.

A full color image could be generated from just two additional channels.

One has a higher value where the image is more blue, as in Lucy’s eyes.

Another has a higher value where the image is more red, as in the big red heart.

The integration was conceptually straightforward because spectral imaging and RTI share the requirement that many images be captured without moving the object or camera.

Spectral imaging varies the wavelength of light, while RTI varies the direction of light.

Spectral imaging strives for diffuse illumination to avoid shadows or unevenness in illumination being mistaken for a chemical property of the object imaged.

RTI focuses primarily on shadows and highlights as indicators of texture and specularity, but pays no more attention to color than what is captured by a conventional camera.

Simply put, spectral is concerned with chrominance and not luminance.

RTI is concerned with luminance and not chrominance.

Information from one source can be merged with information from the other source in an appropriate color space and

processed for a complete set of raking images or RTI.
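A minimal sketch of that merge for a single pixel follows, assuming the full-range BT.601 YCbCr definition used by JPEG. The color space and procedure actually used in the SpectralRTI_Toolkit may differ, and the function names and sample pixel values are hypothetical. Luminance is taken from a raking image and chrominance from a diffusely illuminated color image of the same, unmoved object.

```python
def rgb_to_ycbcr(r, g, b):
    """RGB (0-255) to full-range BT.601 YCbCr (assumed variant)."""
    y = 0.299 * r + 0.587 * g + 0.114 * b
    cb = 128 - 0.168736 * r - 0.331264 * g + 0.5 * b
    cr = 128 + 0.5 * r - 0.418688 * g - 0.081312 * b
    return y, cb, cr

def ycbcr_to_rgb(y, cb, cr):
    """Inverse conversion; a real pipeline would also clip to the display range."""
    r = y + 1.402 * (cr - 128)
    g = y - 0.344136 * (cb - 128) - 0.714136 * (cr - 128)
    b = y + 1.772 * (cb - 128)
    return r, g, b

def merge_pixel(color_rgb, raking_rgb):
    """Keep chrominance from the color source and luminance from the raking source."""
    _, cb, cr = rgb_to_ycbcr(*color_rgb)      # color information only
    y, _, _ = rgb_to_ycbcr(*raking_rgb)       # shadows and highlights only
    return ycbcr_to_rgb(y, cb, cr)

def merge_images(color_img, raking_img):
    """Apply the merge to two registered images given as rows of RGB tuples."""
    return [
        [merge_pixel(c, k) for c, k in zip(color_row, raking_row)]
        for color_row, raking_row in zip(color_img, raking_img)
    ]

if __name__ == "__main__":
    color = [[(200.0, 30.0, 60.0), (40.0, 90.0, 160.0)]]
    raking = [[(120.0, 120.0, 120.0), (70.0, 70.0, 70.0)]]
    print(merge_images(color, raking))
```

Repeating the merge for each raking image, with the same chrominance source every time, would yield a complete set of colored raking images that could then be processed as RTI.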

In addition to the freely available software and documentation described above, I offer myself as a resource for sharing knowledge and conversation. I would be happy to speak with anyone about deciding what level of digitization is appropriate for a given set of artifacts or project. Under any circumstance in which spectral and RTI are possible separately, the combination is easier and superior in quality. Even without full RTI, just a few raking images are easy and beneficial. The main limitation is time and expense. For anyone already doing or considering spectral imaging, the added time and expense of RTI or raking is negligible. The same cannot be said for adding spectral imaging for one already doing RTI. One can reasonably hope for a dramatic increase in low-cost options. My point is not to disparage simpler forms of digitization as a viable solution for many projects. I do hope, however, that awareness of deeper modes of digitization will refine the conversation about the impact of digitization on humanities scholarship. There may be some intrinsic limitations of all digital simulations, but not all digital simulations are equal in their ability to benefit humanities research and teaching. Thank you.